AI-Powered Scams Targeting Seniors: How Artificial Intelligence Is Making Fraud Harder to Detect

AI-powered scams cost American seniors $352 million in 2025, with over 3,100 victims age 60+ reporting losses to the FBI. Criminals now use AI to clone voices from short audio clips, generate photorealistic faces, run deepfake video calls, and write flawless, personalized phishing messages, erasing the warning signs older adults were once taught to spot. The best defenses are a family code word, callback verification, and a refusal to act under time pressure.

Last updated: May 14, 2026 · Primary sources: FBI IC3 2025 Annual Report, FTC Protecting Older Consumers (Dec 2025), DOJ Elder Justice, plus our news corpus of 1,651 elder-fraud articles.

- How AI Is Changing the Scam Landscape

- AI Scam Techniques Targeting Seniors

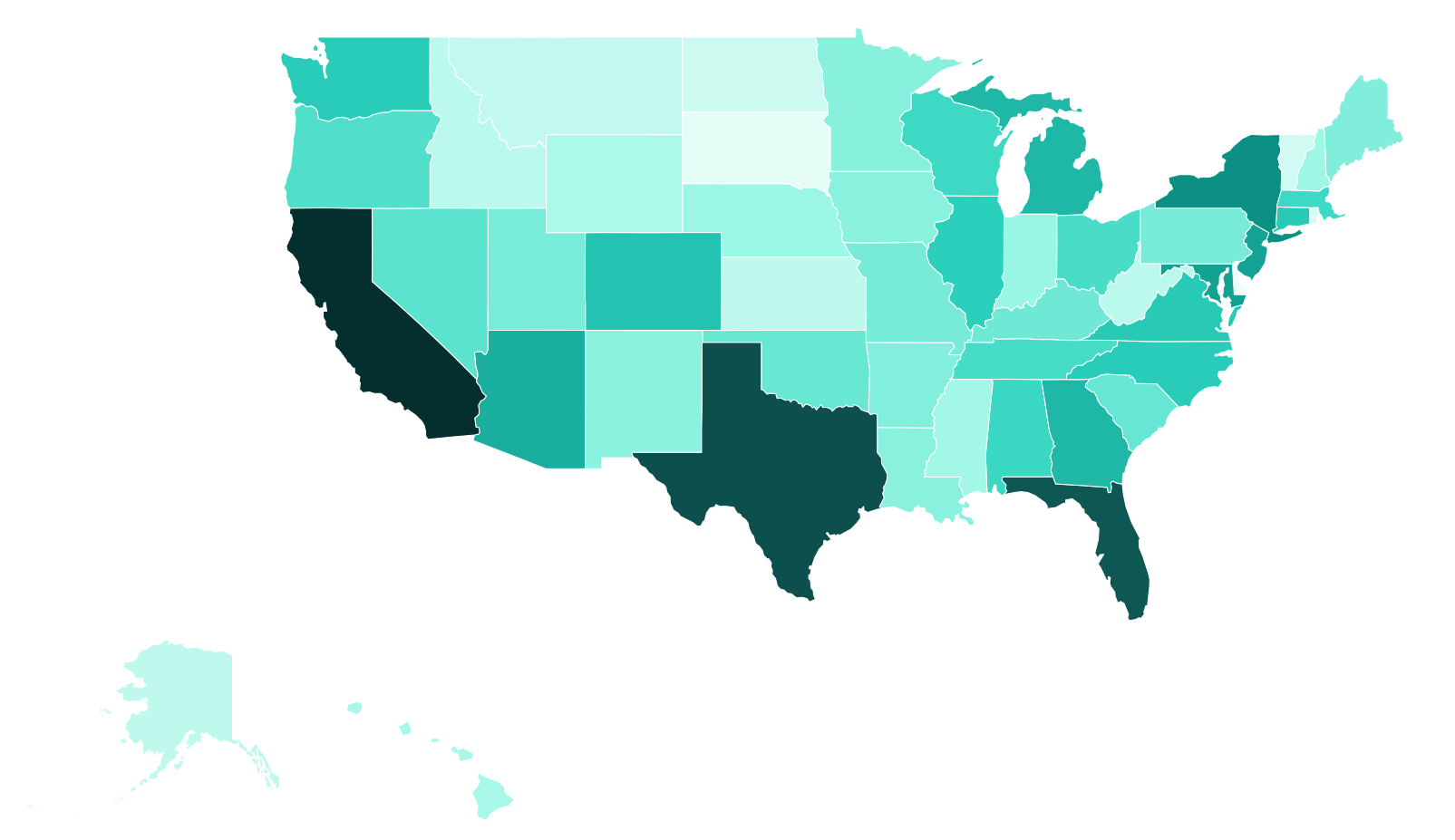

- US Heat Map — AI-Related Fraud Losses (2025)

- AI-Related Fraud Losses by State

- How To Protect Yourself From AI Scams

- AI “Digital Arrest” Scams

- AI-Enhanced “Pig Butchering” (Crypto Investment Fraud)

- AI Recovery Scams (Second-Strike Fraud)

- If You Suspect an AI-Powered Scam

Already been scammed? Read our First 24 Hours Emergency Guide for critical steps to take immediately.

2025 FBI IC3 Data: AI-related fraud cost American seniors $352 million in 2025, with over 3,100 victims aged 60+. AI is now a factor in investment scams, romance fraud, voice cloning attacks, and more. These numbers represent only reported cases — the true scale is likely far higher, as many AI-enhanced scams go unrecognized by victims.

How AI Is Changing the Scam Landscape

For decades, online scams followed familiar patterns: poorly written emails, obvious fake websites, and phone calls from strangers with suspicious accents. Seniors were taught to look for red flags like bad grammar, generic greetings, and too-good-to-be-true offers.

Artificial intelligence has erased nearly all of those warning signs.

Today’s AI tools allow criminals to clone a loved one’s voice from a few seconds of audio, generate photorealistic images of people who don’t exist, write flawless personalized emails, and even conduct real-time video calls using deepfake technology. The result: scams that are harder to detect than ever, even for tech-savvy individuals.

Seniors are disproportionately targeted because many are less familiar with AI capabilities and more likely to trust what they see and hear. A phone call that sounds exactly like a grandchild, a video call from a “bank manager,” or a perfectly worded email from “Medicare” can bypass the instincts that once protected people from fraud.

AI Scam Techniques Targeting Seniors

Below are the most common ways criminals are using artificial intelligence to defraud older adults, with a summary of how each technique works and why it is hard to spot.

- Voice Cloning Scams — AI can clone anyone’s voice from just a few seconds of audio found on social media, voicemail, or video. Criminals use cloned voices to impersonate family members in fake emergency calls (“Grandma, I’m in jail, I need bail money”), making the classic grandparent scam virtually undetectable by ear alone.

- Deepfake Video Scams — Real-time deepfake technology lets scammers appear as someone else on a video call. Victims may believe they are speaking face-to-face with a bank official, financial advisor, or romantic interest — when the person on screen is entirely fabricated. This technique is rapidly growing in romance and investment fraud.

- AI-Generated Phishing & Smishing — AI writes phishing emails and text messages with perfect grammar, personalized details, and convincing branding. Gone are the days when typos and generic greetings were reliable warning signs. AI can craft thousands of unique, targeted messages that reference your real name, bank, or recent purchases.

- AI Romance Scams — Scammers use AI to generate photorealistic profile photos that don’t appear in any reverse image search, and deploy chatbots capable of maintaining emotionally convincing conversations around the clock. Some even use deepfake video for “dates,” making it nearly impossible for victims to detect the deception.

- AI Investment Fraud — Criminals build entirely fake trading platforms with AI-generated dashboards showing fabricated returns, create deepfake videos of celebrities endorsing investments, and use AI chatbots as “personal financial advisors.” The sophistication makes these schemes far harder to distinguish from legitimate platforms.

- AI Voice Phishing (Vishing) — AI-powered phone systems can conduct natural-sounding conversations in real time, adapting to what the victim says. These systems can impersonate bank fraud departments, government agencies, or tech support, staying on the line for extended conversations that would be impractical for human scammers to scale.

- AI “Digital Arrest” Scams — Criminals use AI to generate fake arrest warrants, court orders, and legal documents with the victim’s real personal information, then conduct deepfake video calls posing as judges or federal agents to demand immediate payment. Victims are told they face imminent arrest and are forbidden from contacting family or attorneys — a terrifying scam that is growing rapidly in 2025.

- AI-Enhanced “Pig Butchering” — The single largest fraud category by dollar loss. Criminals use AI chatbots to build trusted relationships with victims over weeks or months, then steer them into fake cryptocurrency trading platforms with AI-generated dashboards showing fabricated returns. AI has industrialized these operations: one criminal network can now manage hundreds of simultaneous victim relationships. Seniors lose more than any other age group: $4.4 billion across all cryptocurrency fraud in 2025, a far broader figure than the $352 million in cases specifically tagged as AI-related.

- AI Recovery Scams (Second-Strike Fraud) — After a victim has already been scammed, criminals come back posing as law enforcement, attorneys, or recovery firms, promising to retrieve the stolen money — for a fee. AI enables them to clone the voices of real FBI agents, generate fake official documents and court orders, and build convincing fake recovery firm websites. This is one of the cruelest forms of fraud because it targets people who are already devastated.

Compare AI Scam Types at a Glance

A scannable side-by-side reference. The tactics differ from one category to the next, but the defenses in the last column overlap: most come down to pausing and verifying through a channel you already trust.

| AI Scam Type | How It Works | What to Listen or Look For | Single Best Defense |
|---|---|---|---|
| Voice cloning | 3–30 seconds of audio from social media is enough to clone a relative’s voice for a fake emergency call. | Crying relative, urgent “send money now,” instructions involving wire transfer, gift cards, or a courier. | Family safe word. Hang up, call back at a known number. |
| Deepfake video | Real-time AI replaces a scammer’s face on a video call to impersonate a bank officer, advisor, or romantic interest. | Unwillingness to meet in person, glitchy lip sync, refusal to hold up a specific number of fingers on request. | Require any video contact to verify identity via a second known channel. |
| AI phishing & smishing | AI writes personalized, grammatically perfect emails or texts referencing real banks, purchases, or family details. | Perfect grammar with urgent action request, link to a near-identical fake login page. | Never log in from a link in an unexpected message. Type the URL or use the app. |
| AI romance | AI-generated profile photos (no reverse-image match) plus 24/7 chatbots that maintain emotionally convincing relationships. | Refuses to meet in person, declines video, fails real-time tests, steers toward money or crypto. | If they avoid in-person meetings for weeks, assume the relationship is fraudulent. |
| AI investment fraud | Fake trading platform with AI-generated dashboards, deepfake celebrity endorsements (Musk, Buffett), AI “advisor” chatbots. | Guaranteed high returns, pressure to deposit, dashboard you can’t withdraw from. | Verify the firm at brokercheck.finra.org before any deposit. |
| AI voice phishing (vishing) | AI phone systems hold real-time natural conversations posing as bank fraud, IRS, or tech support. | Caller knows partial real info, asks you to “verify” the rest; transfer requests “for safety.” | Hang up. Call the institution at the number on the back of your card. |
| AI digital arrest | Deepfake video calls with fake judges or federal agents, AI-generated arrest warrants with your real personal info. | Threat of imminent arrest, demand for “bail” in gift cards or crypto, instructions to tell no one. | No U.S. agency operates this way. Hang up, call 911 if threatened. |
| AI pig butchering | Months-long AI-chatbot relationship that steers victim into fake crypto platform. Largest dollar-loss category. | Wrong-number text that turns warm, eventual mention of crypto trading wins, “tax” required to withdraw. | Never invest on the advice of someone you have only met online. |
| AI recovery scams | After a scam, criminals return with cloned FBI agent voices and fake recovery firms, demanding fees to “retrieve” stolen money. | Unsolicited contact from someone who knows you were scammed; demand for a recovery fee. | Legitimate recovery is free. Report only at ic3.gov and reportfraud.ftc.gov. |

Documented Cases of AI-Powered Elder Fraud

Three reported cases — one federal indictment, one prosecuted money-mule case, and one near-loss intercepted by police — illustrate how AI scams against older adults play out in practice.

Multi-state grandparent-scam ring — $5M+ taken, average victim age 84 (Sept. 2025)

In September 2025 the U.S. Department of Justice charged 13 people with running a grandparent-scam operation that stole more than $5 million from elderly victims across five states. The defendants — some based at a call center in the Dominican Republic — called seniors posing as grandchildren in legal trouble, then dispatched rideshare drivers to collect cash from victims’ homes. The average victim age was 84. A Better Business Bureau analyst told KFVS the ring used AI voice-cloning techniques: “Depending on how advanced the software the scammers use, [the voices] sound exactly like your grandchild.” Source: KFVS-TV / U.S. DOJ, Sept. 17, 2025.

Texas woman, 74 — $600,000 lost to AI-enhanced “Elon Musk” romance scam (Oct. 2025)

A 74-year-old Texas woman was befriended on Facebook by scammers impersonating Elon Musk. Over months of messages, she sent $600,000 after being promised $56 million in return. In a federal prosecution in Florida, the money mule who laundered $250,000 of the stolen funds was sentenced, and restitution was ordered for the full amount. Bradenton police, through their Elder Fraud Unit, traced the impersonation back to images stolen from a former U.S. military servicewoman. Source: Bradenton Herald via Yahoo News, Oct. 27, 2025.

A 79-year-old in Regina — near-loss to a cloned granddaughter’s voice (Dec. 2025)

A 79-year-old woman in Regina, Saskatchewan, received a phone call in early December 2025 from what sounded exactly like her granddaughter, crying that she had been in a car accident and arrested. She later described the voice as identical to her granddaughter’s, down “to her mannerisms, her little ups and downs.” She nearly paid the demanded “bail” before contacting the supposed defense lawyer directly, who immediately told her she was being scammed. Police intercepted the cash-courier pickup before any money was lost. Five seniors in Saskatoon had lost a combined $45,000 to the same operation in the prior month. Source: CBC News, Dec. 12, 2025.

Have a documented case to add? Share details with our research team.

US Heat Map — AI-Related Fraud Targeting Seniors (2025)

AI-Related Fraud Losses by State (2025 FBI IC3 Data)

Data & methodology: FBI Internet Crime Complaint Center (IC3) 2025 Annual Report, “AI Related” crime category, victims aged 60+. The “AI Related” tag is applied by IC3 when the victim or law enforcement identifies artificial intelligence as a factor in the fraud, including voice cloning, deepfake video, AI-generated text or imagery, and AI chatbots. National total: $352,496,231 in losses from 3,143 reported senior victims. State totals reflect victim residence, not the location of the perpetrator. Reported figures represent a floor: the FTC estimates only ~5% of fraud is reported to federal authorities, so the true scale is likely substantially higher. Last refreshed against source: May 15, 2026.

| Rank | State / Territory | AI-Related Loss (USD) | Victims (60+) |
|---|---|---|---|
| 1 | California | $63,756,748 | 438 |
| 2 | Texas | $43,761,799 | 318 |
| 3 | Florida | $39,909,303 | 350 |
| 4 | New York | $18,873,395 | 149 |
| 5 | Maryland | $14,920,410 | 69 |
| 6 | New Jersey | $14,661,280 | 80 |
| 7 | Arizona | $12,330,602 | 97 |
| 8 | Michigan | $10,573,170 | 61 |
| 9 | Georgia | $10,472,324 | 71 |
| 10 | Colorado | $8,839,714 | 71 |
| 11 | Virginia | $7,817,992 | 94 |
| 12 | Connecticut | $7,758,718 | 23 |
| 13 | North Carolina | $7,433,724 | 97 |
| 14 | Washington | $7,430,022 | 95 |
| 15 | Illinois | $6,921,395 | 66 |
| 16 | Alabama | $5,543,555 | 38 |
| 17 | Massachusetts | $5,342,268 | 54 |
| 18 | Wisconsin | $5,209,036 | 25 |
| 19 | Tennessee | $4,777,382 | 57 |
| 20 | Ohio | $4,577,837 | 67 |
| 21 | Oregon | $4,134,965 | 79 |
| 22 | Nevada | $3,558,425 | 61 |
| 23 | South Carolina | $2,963,896 | 43 |
| 24 | Oklahoma | $2,868,742 | 26 |
| 25 | Kentucky | $2,598,602 | 30 |
| 26 | Missouri | $2,265,508 | 57 |
| 27 | Pennsylvania | $2,256,035 | 81 |
| 28 | Utah | $2,180,149 | 37 |
| 29 | Maine | $1,951,474 | 9 |
| 30 | Arkansas | $1,908,569 | 22 |
| 31 | Minnesota | $1,646,354 | 27 |
| 32 | Louisiana | $1,614,679 | 24 |
| 33 | Iowa | $1,578,967 | 22 |
| 34 | New Mexico | $1,518,562 | 16 |
| 35 | Puerto Rico | $1,418,968 | 7 |
| 36 | Indiana | $1,164,075 | 33 |
| 37 | Nebraska | $1,068,561 | 11 |
| 38 | New Hampshire | $992,587 | 11 |
| 39 | Mississippi | $868,559 | 15 |
| 40 | Hawaii | $725,974 | 23 |
| 41 | Wyoming | $642,935 | 3 |
| 42 | West Virginia | $335,000 | 10 |
| 43 | Idaho | $328,004 | 30 |
| 44 | Kansas | $296,123 | 16 |
| 45 | Alaska | $290,620 | 8 |
| 46 | Montana | $232,519 | 50 |
| 47 | North Dakota | $135,000 | 1 |
| 48 | Vermont | $87,290 | 5 |
| 49 | Rhode Island | $48,061 | 1 |
| 50 | District of Columbia | $7,612 | 2 |
| 51 | South Dakota | $7,000 | 5 |
| 52 | Delaware | $35 | 3 |
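Ranking states by total loss and ranking them by loss per victim can tell different stories. As a rough illustration, the short Python sketch below divides reported loss by victim count for the national total and for a handful of rows copied from the state table. The row selection and the helper names (`loss_per_victim`, `rank_by_loss_per_victim`) are illustrative choices for this example only, not part of any IC3 tool or methodology.

```python
# Illustrative only: a few rows hand-copied from the 2025 IC3 "AI Related"
# state table (victims aged 60+). Values are (reported loss in USD, victims).
STATE_ROWS = {
    "California": (63_756_748, 438),
    "Texas": (43_761_799, 318),
    "Florida": (39_909_303, 350),
    "Connecticut": (7_758_718, 23),
    "Maine": (1_951_474, 9),
    "Montana": (232_519, 50),
}

NATIONAL_LOSS = 352_496_231
NATIONAL_VICTIMS = 3_143


def loss_per_victim(loss: int, victims: int) -> float:
    """Average reported loss per victim, in US dollars."""
    if victims <= 0:
        raise ValueError("victim count must be positive")
    return loss / victims


def rank_by_loss_per_victim(rows: dict[str, tuple[int, int]]) -> list[tuple[str, float]]:
    """Return (state, average loss) pairs, highest average first."""
    averages = [
        (state, loss_per_victim(loss, victims))
        for state, (loss, victims) in rows.items()
    ]
    return sorted(averages, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    national = loss_per_victim(NATIONAL_LOSS, NATIONAL_VICTIMS)
    print(f"National average: ${national:,.0f}")
    for state, average in rank_by_loss_per_victim(STATE_ROWS):
        print(f"{state:<12} ${average:,.0f}")
```

On these rows, Connecticut (12th by total loss) has the highest average, about $337,000 per victim, while Montana averages about $4,650; the national average is roughly $112,000. Where a state has only a handful of reported victims, a single large loss can dominate its average, so per-victim figures are best read as rough context rather than a trend.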

How To Protect Yourself From AI-Powered Scams

- Establish a family code word. Agree with close family members on a secret word or phrase that no one outside the family would know. If someone calls claiming to be a relative in distress, ask for the code word before sending any money.

- Verify by calling back. If you receive a suspicious call, hang up and call the person directly using a phone number you already have — not the number that called you. This defeats both voice cloning and caller ID spoofing.

- Be skeptical of video calls from strangers. Deepfake video technology is now accessible to criminals. A “bank official” or “romantic interest” who only communicates via video may not be who they appear to be. Ask to meet in person or verify through official channels.

- Don’t trust perfect communication. AI has eliminated the grammar mistakes and awkward phrasing that once helped identify scams. A flawlessly written email or text is no longer proof it’s legitimate.

- Reverse image search isn’t enough. AI can generate entirely new faces that won’t appear in any search results. If an online contact’s photo returns zero matches, it doesn’t mean the person is real — it may mean the photo was AI-generated.

- Never act under time pressure. Regardless of how convincing the contact appears — voice, video, or text — legitimate organizations and family members will give you time to verify. Urgency is the scammer’s most powerful tool.

- Limit personal information online. AI tools scrape social media for voice samples, photos, personal details, and relationship information. Consider making accounts private and limiting what you share publicly.

- Talk to someone you trust. Before acting on any unexpected request for money or information, discuss it with a family member, friend, or advisor. Scammers deliberately isolate their victims.

If You Suspect an AI-Powered Scam

The most common modern AI-imposter scheme is the Phantom Hacker scam — a three-phase impersonation that has stolen over $1 billion from US seniors. For a list of federal prosecutions in elder-fraud cases, see our DOJ Elder Fraud Prosecutions Tracker.

- Report it to the FBI’s Internet Crime Complaint Center (ic3.gov) — specifically mention if AI, voice cloning, or deepfakes were involved

- Contact your local police and your state Attorney General’s office

- Notify your bank or financial institution immediately if money was transferred

- Report to the FTC at reportfraud.ftc.gov

- Save all evidence: screenshots, call logs, emails, recordings if possible

Remember: AI has made it possible for scammers to sound like your family, look like a trusted professional, and write like a legitimate organization. The best defense is no longer spotting obvious fakes — it’s building verification habits that work even when the deception is perfect.

Frequently Asked Questions About AI Scams Targeting Seniors

What is an AI-powered scam?

An AI-powered scam uses artificial intelligence tools — voice cloning, deepfake video, generative text, or image generators — to make fraud appear more legitimate. Common variants include cloned-voice ‘grandparent’ calls, deepfake video bank calls, AI-written phishing emails with perfect grammar, and AI-generated romance partners. The FBI tracks AI as a separate factor in elder fraud and reported $352 million in age 60+ losses for 2025.

Can AI really clone someone’s voice from a short audio clip?

Yes. Several consumer and underground tools can produce a convincing voice clone from 3 to 30 seconds of source audio. Scammers find samples in social media posts, voicemail greetings, YouTube uploads, or family videos. The cloned voice can then be used in real-time phone calls. A family safe word, a code phrase only relatives know, is the strongest defense: a clone can copy how someone sounds, but it cannot know a secret it has never heard.

How do I know if a phone call is AI-generated?

Listen for emotional flatness, unusual pauses, slightly off pronunciation of unfamiliar names, and an unwillingness to answer specific questions only the real person would know. If anything feels off, hang up and call the person back at their known number — do not use any number the caller provides. This single habit defeats both voice cloning and caller-ID spoofing.

What is the AI voice cloning grandparent scam?

Criminals clone a grandchild’s voice from social media audio and call grandparents claiming the grandchild is in jail, in a car accident, or stranded abroad. The cloned voice tearfully begs for money to be wired immediately. Variants include claiming a ‘lawyer’ or ‘bail bondsman’ will call next. Hang up, verify directly with the grandchild at their known number, and report the call to ic3.gov, specifically mentioning AI voice cloning.

How do scammers use deepfake video to defraud seniors?

Real-time deepfake software allows a criminal to appear on a video call as a bank executive, financial advisor, or romantic interest. The technology now runs on a standard laptop. Common use cases include impersonating bank fraud-department staff during ‘safe-account’ transfer scams and posing as a partner in long-distance romance fraud. If someone refuses to meet in person or share a verifiable phone number, treat the video call as suspect.

How much did AI scams cost American seniors in 2025?

The FBI Internet Crime Complaint Center (IC3) recorded $352 million in losses from over 3,100 victims age 60+ where artificial intelligence was a factor in the crime. This is widely understood to be a floor, not a ceiling — many AI-enhanced scams are not recognized as AI-related by victims at the time of reporting, so the true scale of AI fraud against seniors is likely substantially higher.

What should I do if I think my parent was scammed by AI?

Act fast. Stop any pending payments or wire transfers, contact the bank and any transfer service used, change passwords on email and banking, and preserve every screenshot, voicemail, and message. Report the fraud at ic3.gov (mention AI specifically), reportfraud.ftc.gov, and your state Attorney General’s office. Our First 24 Hours Emergency Guide walks through the full sequence.

What is an AI ‘digital arrest’ scam?

A digital arrest scam uses AI-generated fake arrest warrants, court documents, and deepfake video calls with people posing as judges, federal agents, or prosecutors. Victims are told they face imminent arrest unless they pay ‘bail’ immediately and are forbidden from contacting family or attorneys. No U.S. law-enforcement agency operates this way — they will never demand payment over video. Hang up and call 911 if you feel threatened.

Are AI romance scams common in 2026?

Yes. AI romance scams have escalated sharply since 2024. Criminals use AI-generated profile photos that return no reverse-image-search matches and deploy chatbots capable of holding emotionally convincing conversations 24/7. Some operations use deepfake video for ‘first dates.’ If an online partner refuses any in-person meeting, fails simple real-time tests (such as holding up a specific number of fingers on request), or steers quickly toward money or crypto, assume the relationship is fraudulent.

How can I protect an older relative from AI scams?

Agree on a family code word that everyone memorizes. Set a callback protocol: any unexpected request for money triggers a callback to a known number. Make social-media accounts private to limit voice and photo samples available to scammers. Talk openly about specific scam patterns — naming what to expect reduces the shock factor scammers exploit. Our family caregiver guide provides a complete monitoring and conversation framework.
